The Barbie Hadoop Cluster (Multi-Node Cluster)

I've finally finished the Barbie Hadoop Cluster! It's been several months since I started the project, but after the hiatus I was ready to come back and get it finished.

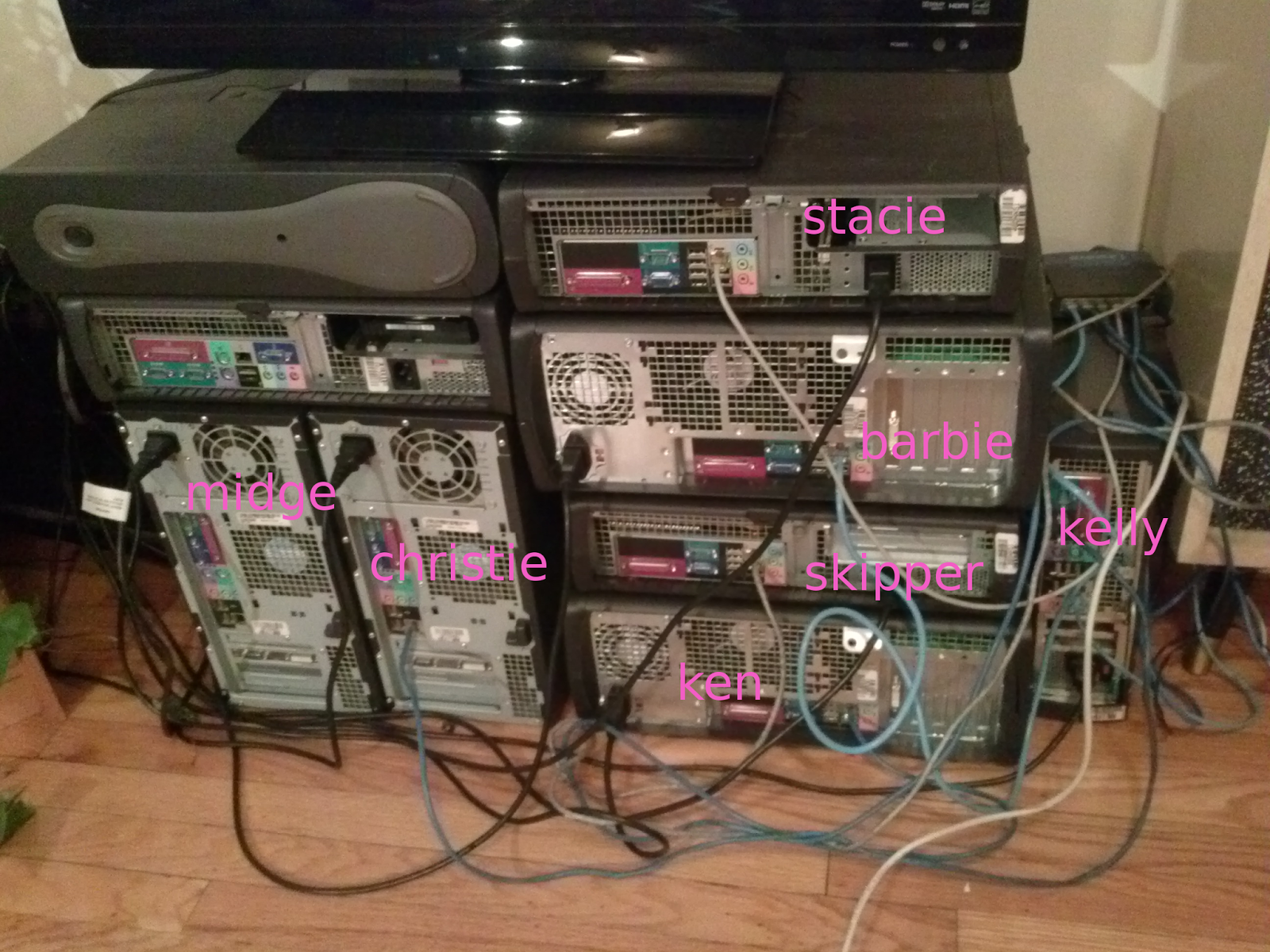

Due to space limitations in our apartment, the stack of 9 Dell PCs is also being used as a TV/monitor stand. They're connected through a switch and all the extra ports on my roouter at the moment. Hopefully at her permanent home, Barbie will look a little more at ease.

Setting Barbie Up

The beginning of the procedure for the setup is basically exactly the same as I described in my previous post, except for a change in the configuration files in the associated github project. Pretty much the entirety of the hadoop setup on each computer was accomplished simply with the folowing command.

sudo git clone https://github.com/lots-of-things/hadoop-compiled.git /usr/local/hadoop

This is followed by changing permissions and adding a few directories to the $PATH by modifiying .bashrc.

sudo chown -R doopy:hadoop /usr/local/hadoop

cat /usr/local/addToBash >> ~/.bashrc

This had to be done on each of the seven working machines. They each had different RAM and hard disk amounts, with skipper and stacie being the best and barbie and ken being the worst.

I changed the /etc/hosts on every machine so that each machine could recognize each other by name.

10.0.0.9 barbie

10.0.0.10 kelly

10.0.0.13 skipper

10.0.0.14 stacie

10.0.0.16 ken

10.0.0.17 christie

10.0.0.18 midge

I decided to make skipper the master, Each machine needs to let the master connect by ssh without a password so I copied Skipper's rsa key made in the initial setup using

ssh-copy-id -i ~/.ssh/id_rsa.pub remote-host

Where remote-host had to be changed to every machine I wanted to connect to. Finally, skipper's slaves file (etc/hadoop/slaves) needed to have each machine added to it (including herself so she would do some work too instead of just running as the NameNode).

barbie

kelly

skipper

stacie

ken

christie

midge

In all honesty...

I screwed this up about a dozen times before getting it to work, but every mistake was something well-documented with solutions on the web. The best trick I learned was to delete the datanode temporary file on each machine whenever I screwed things up. In my setup that would be done like this.

rm -r /usr/local/hadoop/datanode

And Finally

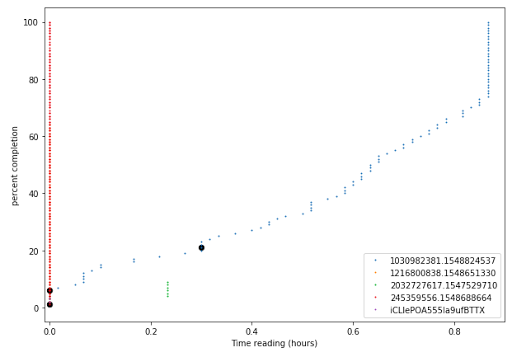

I was finally able to load a few documents into the hdfs and run the wordcount on them across all machines. Overall, I feel extremely satisfied getting this done finally (though I've got a much more sophisticated system at my disposal at the cluster where I work). Now I'm just getting ready to try it out on one of my pet projects!

will stedden

will stedden